The Royal Institution of Chartered Surveyors (RICS) has introduced the profession's first-ever mandatory global standard on responsible AI use: from March 9, 2026, every RICS member and RICS-regulated firm worldwide must comply with it.[4] The standard arrives as AI-powered defect detection, thermal imaging analysis, and automated report generation tools spread across the industry, bringing real efficiency gains alongside significant professional liability risks. Implementing it in building survey practice requires surveyors to navigate governance frameworks, establish accountability protocols, and maintain professional judgment while making use of AI's analytical power.

The standard addresses a critical vulnerability: automation bias—the dangerous assumption that computer-generated outputs must be accurate simply because they're machine-produced.[4] For building surveyors conducting structural surveys or commercial building assessments, this bias could lead to missed defects, incorrect valuations, and professional negligence claims.

Key Takeaways

✅ Mandatory Compliance: All RICS members must comply with the AI standard from March 9, 2026, and must complete a documented governance assessment before deploying any AI system with material impact on service delivery

🎯 Material Impact Determination: Surveyors must evaluate whether each AI system's outputs are capable of influencing service delivery, for example through defect identification or opinion composition, and document their reasoning for each system

📋 Risk Register Requirement: Firms using AI must maintain comprehensive risk registers addressing inherent bias, erroneous outputs, and accountability gaps

👤 Named Surveyor Accountability: Every AI-assisted decision requires a named, qualified surveyor to accept responsibility for output reliability and professional advice

🔍 Professional Judgment Paramount: AI tools assist but never replace professional expertise—surveyors remain fully accountable regardless of technological assistance

Understanding the RICS AI Standard: Scope and Mandatory Requirements

The RICS professional standard establishes a comprehensive framework spanning eight critical areas that govern how chartered surveyors integrate artificial intelligence into their practice.[2] Unlike voluntary guidance, this standard carries mandatory force, creating enforceable obligations for all RICS-regulated firms and individual members globally.

The Eight Compliance Pillars

The standard's architecture addresses the complete lifecycle of AI implementation in surveying practice:

- Knowledge Requirements: Surveyors must understand AI capabilities, limitations, and potential biases before deployment

- Practice Management: Firms must establish governance structures for AI oversight and decision-making

- Data Governance: Protocols for data quality, security, and privacy protection

- System Governance: Pre-implementation assessments and ongoing monitoring frameworks

- AI Procurement: Due diligence requirements when acquiring third-party AI solutions

- Output Reliability: Validation protocols ensuring AI-generated information meets professional standards

- Client Communication: Transparency requirements about AI use in service delivery

- Proprietary Development: Additional obligations for firms creating custom AI systems[2]

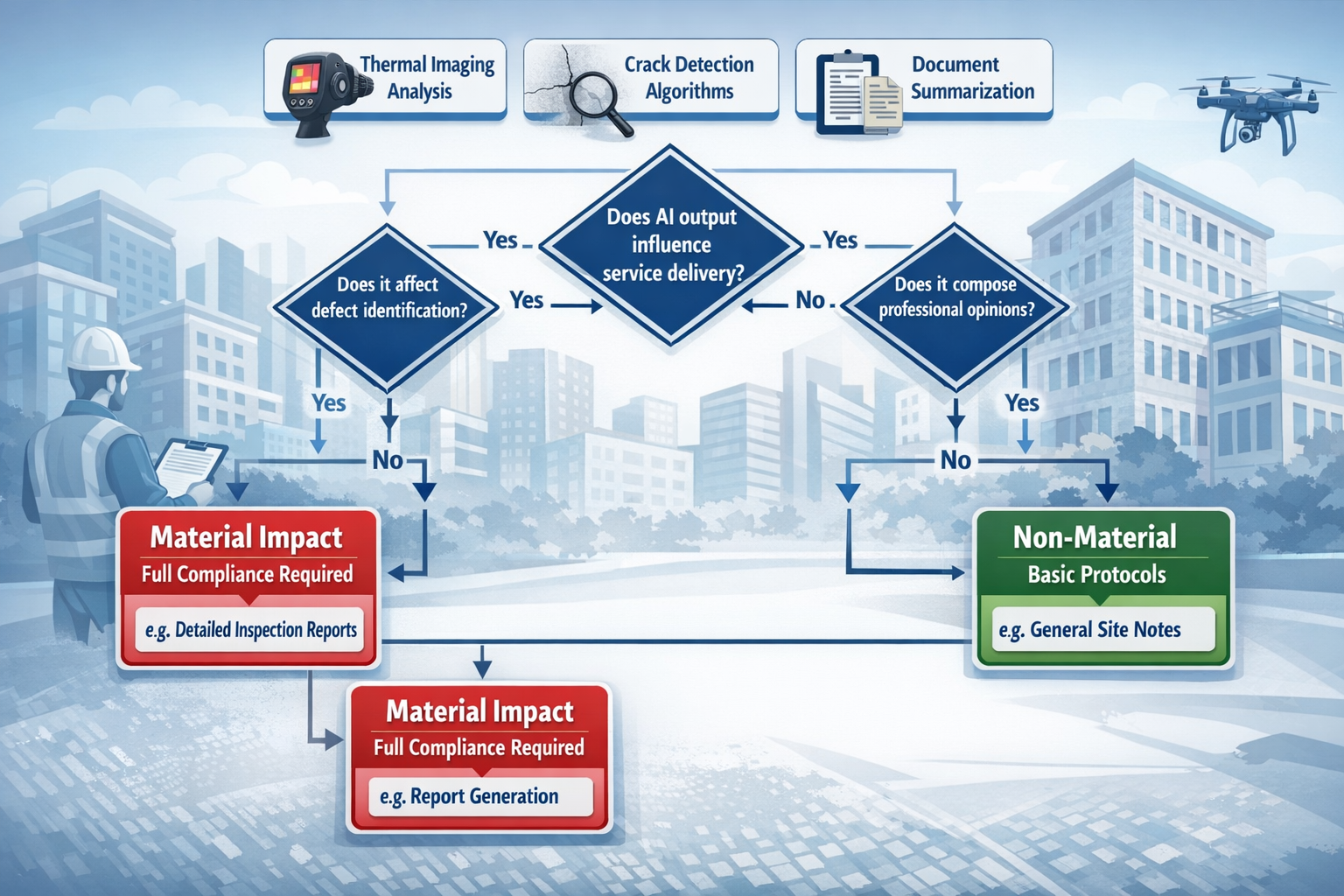

Material Impact: The Central Compliance Trigger

The concept of "material impact" determines whether full compliance protocols apply to a specific AI application. Material impact occurs when AI outputs are capable of influencing service delivery in meaningful ways.[1] For building surveyors, this includes:

- Defect Identification Systems: AI algorithms analyzing photographs, thermal images, or drone roof survey data to identify structural issues

- Opinion Composition: AI tools that draft or suggest professional opinions about building condition

- Document Summarization: Systems that extract and synthesize information from technical reports, potentially affecting survey conclusions

- Measurement and Calculation: Automated systems determining dimensions, areas, or structural load assessments

Crucially, surveyors cannot simply assume an AI tool lacks material impact. The standard requires documented determination and reasoning for each system, creating an audit trail demonstrating compliance.[1]

"The standard applies only to AI outputs with material impact on surveying service delivery—outputs capable of influencing service delivery. Members must document this determination and their reasoning."[1]

Non-Material AI Applications

Not all AI use triggers full compliance requirements. Administrative tools with minimal influence on professional output—such as scheduling software, basic document storage systems, or email filtering—typically fall outside the material impact threshold. However, surveyors must still document why they've classified these systems as non-material.[3]
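Because the standard asks for a documented determination and reasoning for every system, material or not, a firm may find it useful to hold these determinations as structured records. The Python sketch below is a minimal illustration under stated assumptions: the `MaterialImpactRecord` class, its field names, the placeholder surveyor name, and the rule that a system is material if it can influence any of the four areas listed earlier are this example's own choices, not anything RICS prescribes.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MaterialImpactRecord:
    """Hypothetical audit-trail entry for one AI system's material impact determination."""

    system_name: str
    assessed_by: str  # named surveyor making the determination
    assessed_on: date
    # Can the system's outputs influence each area of service delivery?
    defect_identification: bool
    opinion_composition: bool
    document_summarization: bool
    measurement_calculation: bool
    reasoning: str  # written reasoning, required for material and non-material systems

    def __post_init__(self) -> None:
        # A classification with no documented reasoning leaves no audit trail.
        if not self.reasoning.strip():
            raise ValueError(f"No reasoning recorded for {self.system_name}")

    @property
    def is_material(self) -> bool:
        # Assumption: any capability to influence a listed area makes the system material.
        return any(
            (
                self.defect_identification,
                self.opinion_composition,
                self.document_summarization,
                self.measurement_calculation,
            )
        )


scheduler = MaterialImpactRecord(
    system_name="Appointment scheduler",
    assessed_by="A. Surveyor MRICS",  # placeholder name
    assessed_on=date(2026, 2, 2),
    defect_identification=False,
    opinion_composition=False,
    document_summarization=False,
    measurement_calculation=False,
    reasoning="Books inspection slots only; outputs never reach survey findings.",
)
thermal = MaterialImpactRecord(
    system_name="Thermal image classifier",
    assessed_by="A. Surveyor MRICS",  # placeholder name
    assessed_on=date(2026, 2, 2),
    defect_identification=True,
    opinion_composition=False,
    document_summarization=False,
    measurement_calculation=False,
    reasoning="Flags suspected moisture that may be reported as a defect.",
)
print(scheduler.is_material, thermal.is_material)  # False True
```

Refusing to create a record without reasoning mirrors the point above that even non-material classifications must be justified in writing.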

Implementing the Standard: Pre-Deployment Protocols

Before deploying any AI system with material impact, RICS-regulated firms must complete structured governance assessments and establish foundational compliance infrastructure. These pre-deployment protocols form the bedrock of responsible AI implementation.

Mandatory System Governance Assessments

The standard requires written system governance assessments before AI deployment, documenting how the firm will manage, monitor, and control the technology.[2] For a building surveyor considering AI-powered defect detection software for homebuyer reports, this assessment must address:

Technical Evaluation Components:

- Algorithm transparency and explainability

- Training data sources and potential biases

- Accuracy rates and error patterns

- Integration with existing workflows

- Data security and privacy protections

Operational Governance Elements:

- Named individuals responsible for system oversight

- Decision-making authority for AI output acceptance or rejection

- Escalation procedures when AI produces uncertain results

- Documentation requirements for AI-assisted decisions

- Quality assurance protocols
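The assessment components above lend themselves to a simple completeness check before go-live. This Python sketch assumes each item is recorded as free text under a short key; the key names and the `ready_to_deploy` gate are hypothetical, and a complete checklist shows only that every item was addressed in writing, not that the answers are adequate.

```python
# Keys paraphrase the assessment components listed above (naming is an assumption).
TECHNICAL_ITEMS = (
    "algorithm transparency",
    "training data and bias",
    "accuracy and error patterns",
    "workflow integration",
    "data security and privacy",
)
OPERATIONAL_ITEMS = (
    "named oversight owner",
    "output acceptance authority",
    "escalation procedure",
    "decision documentation",
    "quality assurance",
)


def missing_items(assessment: dict[str, str]) -> list[str]:
    """Return required items that have no written entry in the assessment."""
    required = TECHNICAL_ITEMS + OPERATIONAL_ITEMS
    return [item for item in required if not assessment.get(item, "").strip()]


def ready_to_deploy(assessment: dict[str, str], is_material: bool) -> bool:
    """Hypothetical gate: a material system needs every item written up first."""
    if not is_material:
        # Non-material systems skip this gate but still need a documented determination.
        return True
    return not missing_items(assessment)


draft = {item: "See assessment section 2" for item in TECHNICAL_ITEMS}
print(ready_to_deploy(draft, is_material=True))  # False
print(missing_items(draft))  # the five operational items are still blank
```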

Creating Comprehensive Risk Registers

Risk registers represent a mandatory compliance requirement for firms using AI with material impact.[3] These living documents must identify, assess, and document mitigation strategies for AI-related risks specific to building surveying practice.

Critical Risks to Document:

| Risk Category | Specific Examples | Mitigation Strategies |
|---|---|---|
| Inherent Bias | AI trained predominantly on modern construction may misidentify heritage building defects | Validate AI outputs against traditional inspection; maintain human oversight for pre-1950 properties |
| Erroneous Outputs | Thermal imaging AI misclassifying condensation as structural damp | Require physical verification of all AI-flagged defects before client reporting |
| Data Quality Issues | Poor photograph quality leading to missed crack identification | Establish minimum image resolution standards; implement multi-angle capture protocols |
| Accountability Gaps | Unclear responsibility when AI and surveyor disagree | Named surveyor final authority protocol; documented override reasoning required |
| Client Misunderstanding | Clients assuming AI guarantees complete defect detection | Mandatory client communication about AI limitations and professional judgment role |

The risk register must be regularly reviewed and updated as new risks emerge or mitigation strategies prove effective or inadequate.[3]

Developing Responsible AI Use Policies

Every RICS-regulated firm using or intending to use AI systems must develop and implement responsible AI use policies.[1] These policies translate the RICS standard's principles into firm-specific operational procedures.

Essential Policy Components:

📌 Purpose and Scope: Clear statement of which AI systems are covered and why the policy exists

📌 Governance Structure: Named roles and responsibilities for AI oversight, including senior management accountability

📌 Material Impact Assessment Process: Step-by-step procedures for determining whether new AI tools require full compliance protocols

📌 Pre-Deployment Requirements: Checklist of mandatory assessments, approvals, and documentation before AI system activation

📌 Output Validation Protocols: Specific procedures for verifying AI-generated information before incorporation into professional advice

📌 Client Communication Standards: Templates and requirements for disclosing AI use to clients transparently

📌 Training Requirements: Mandatory competency standards for staff using AI systems

📌 Incident Response: Procedures when AI errors are discovered or suspected

📌 Review and Update Schedule: Regular policy review frequency (minimum annually recommended)

These policies must cover both internally developed and external AI systems, because relying on third-party software does not remove firm-level accountability.[1]

Due Diligence for AI Procurement

When acquiring third-party AI solutions, surveyors must conduct due diligence that goes beyond typical software evaluation. The standard's AI procurement requirements call for understanding how external AI systems function, their limitations, and their risk profiles.[2]

Procurement Due Diligence Checklist:

✓ Request transparency about training data sources and potential biases

✓ Obtain documentation of accuracy rates and known error patterns

✓ Verify data security certifications and privacy compliance

✓ Understand system limitations and scenarios where it may fail

✓ Confirm vendor support for output validation and quality assurance

✓ Review contractual liability provisions for AI-related errors

✓ Assess compatibility with RICS compliance documentation requirements

✓ Evaluate vendor's commitment to ongoing system improvement and bias mitigation

For RICS registered valuers considering AI valuation tools, this due diligence becomes particularly critical given the financial implications of valuation errors.

Operational Protocols for AI-Assisted Building Surveys in 2026 Practice

Once pre-deployment requirements are satisfied, surveyors must implement rigorous operational protocols ensuring AI enhances rather than compromises professional service quality. These day-to-day practices embed the RICS standard's principles into actual survey work.

The Named Surveyor Accountability Model

The RICS standard establishes an unambiguous accountability framework: written decisions about AI output reliability must be prepared or supervised by an appropriately qualified, named surveyor who accepts responsibility for the output's use.[3] This requirement leaves no doubt about who carries professional liability when AI tools are involved.

Practical Implementation:

For a surveyor conducting a specific defect investigation using AI-enhanced thermal imaging analysis:

- AI Output Generation: Thermal imaging AI flags potential moisture intrusion in external wall cavity

- Named Surveyor Review: Qualified surveyor (name documented) reviews AI analysis against physical inspection observations

- Professional Judgment Application: Surveyor considers building age, construction type, weather conditions, and alternative explanations

- Decision Documentation: Written record stating whether AI output is accepted, modified, or rejected, with reasoning

- Report Integration: If accepted, AI findings incorporated into survey report with surveyor's professional endorsement

- Accountability Assignment: Named surveyor's responsibility explicitly stated in file documentation

This model ensures that professional judgment remains paramount and automation bias cannot shift responsibility to the technology.[4]
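The review-and-record steps above can be sketched as a file record that refuses to exist without a named surveyor and written reasoning, and a report builder that passes through only endorsed findings. This is a hypothetical Python illustration: the `Outcome` values mirror the accept, modify, or reject decision described above, while the class names, fields, and the sample surveyor and findings are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    ACCEPTED = "accepted"
    MODIFIED = "modified"
    REJECTED = "rejected"


@dataclass(frozen=True)
class OutputDecision:
    """Hypothetical file record of a named surveyor's decision on one AI output."""

    ai_finding: str
    surveyor: str  # named, qualified surveyor who accepts responsibility
    outcome: Outcome
    reasoning: str
    final_wording: str = ""  # the surveyor's own wording when the output is modified

    def __post_init__(self) -> None:
        if not self.surveyor.strip():
            raise ValueError("Every decision needs a named surveyor")
        if not self.reasoning.strip():
            raise ValueError("Record why the output was accepted, modified, or rejected")
        if self.outcome is Outcome.MODIFIED and not self.final_wording.strip():
            raise ValueError("A modified finding needs the surveyor's revised wording")


def report_findings(decisions: list[OutputDecision]) -> list[str]:
    """Return only surveyor-endorsed findings, in their final wording, for the report."""
    findings = []
    for d in decisions:
        if d.outcome is Outcome.ACCEPTED:
            findings.append(f"{d.ai_finding} (endorsed by {d.surveyor})")
        elif d.outcome is Outcome.MODIFIED:
            findings.append(f"{d.final_wording} (endorsed by {d.surveyor})")
        # Rejected outputs remain in the file record but never reach the report.
    return findings


decisions = [
    OutputDecision(
        ai_finding="Moisture intrusion in north wall cavity",
        surveyor="J. Smith MRICS",  # placeholder name
        outcome=Outcome.MODIFIED,
        reasoning="Meter readings confirm damp, but localised below a leaking gutter joint",
        final_wording="Localised damp to north wall below defective gutter joint",
    ),
    OutputDecision(
        ai_finding="Thermal anomaly at lintel",
        surveyor="J. Smith MRICS",  # placeholder name
        outcome=Outcome.REJECTED,
        reasoning="Anomaly traced to solar gain at time of capture; no defect on inspection",
    ),
]
print(report_findings(decisions))
```

Only the modified finding reaches the report, in the surveyor's wording; the rejected output stays on file with its reasoning, which preserves the audit trail.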

Output Reliability Validation Protocols

Before incorporating AI-generated information into professional advice, surveyors must validate output reliability through structured protocols. The specific validation approach depends on the AI application and potential consequences of errors.

Validation Framework for Common Building Survey AI Applications:

Defect Detection AI (High Risk):

- Mandatory physical verification of all AI-identified defects

- Cross-reference with historical building records

- Consider false negative risk (missed defects)

- Document validation methodology in survey file

Measurement and Calculation AI (Medium-High Risk):

- Spot-check minimum 20% of AI measurements manually

- Verify critical measurements (structural elements) at 100%

- Compare AI calculations against manual methods for complex scenarios

- Maintain calculation worksheets showing validation

Document Summarization AI (Medium Risk):

- Review original documents for accuracy of AI summary

- Verify no critical information omitted

- Check for context misinterpretation

- Maintain both AI summary and source documents

Report Drafting Assistance AI (Medium Risk):

- Line-by-line review of AI-generated text

- Verify technical accuracy of all statements

- Ensure appropriate qualifications and limitations included

- Confirm consistency with inspection findings

For subsidence surveys where AI might analyze crack patterns, validation becomes particularly critical given the serious structural and insurance implications.
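The measurement rules above (every critical measurement checked, plus a minimum sample of the rest) can be expressed as a small sampling routine. This Python sketch is one possible reading: it checks all critical items and rounds the 20% sample of non-critical items upward, so the overall proportion checked never falls below 20%. The 2% agreement tolerance in `within_tolerance` is a hypothetical figure, not a RICS requirement.

```python
import random


def select_for_manual_check(measurements, percent=20, seed=None):
    """Return every critical measurement plus at least `percent`% of the others.

    `measurements` is a list of (label, is_critical) pairs.
    """
    critical = [m for m in measurements if m[1]]
    others = [m for m in measurements if not m[1]]
    # Integer ceiling division, so the sample never falls below the stated minimum.
    k = (len(others) * percent + 99) // 100
    rng = random.Random(seed)  # a fixed seed makes the selection reproducible for the file
    return critical + rng.sample(others, k)


def within_tolerance(ai_value, manual_value, tolerance_pct=2.0):
    """Hypothetical acceptance test: AI value within tolerance_pct % of the manual check."""
    return abs(ai_value - manual_value) <= abs(manual_value) * tolerance_pct / 100


measurements = [("Beam span B1", True), ("Column C2 height", True)] + [
    (f"Room {i} floor area", False) for i in range(1, 13)
]
sample = select_for_manual_check(measurements, seed=2026)
print(len(sample))  # 5: both critical items plus 3 of the 12 room areas
print(within_tolerance(ai_value=4.05, manual_value=4.00))  # True
print(within_tolerance(ai_value=4.20, manual_value=4.00))  # False
```

Random selection, rather than picking the easiest measurements, keeps the spot-check honest; recording the seed lets the same sample be reconstructed from the survey file.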

Client Communication and Transparency Requirements

The RICS standard requires appropriate transparency with clients about AI use in service delivery.[2] This transparency serves multiple purposes: managing client expectations, ensuring informed consent, and protecting professional reputation.

Effective Client Communication Strategies:

In Initial Engagement:

- Disclose AI tools used in survey process

- Explain how AI assists (not replaces) professional judgment

- Clarify that surveyor remains fully accountable for all advice

- Address any client concerns about AI reliability

In Survey Reports:

- Note where AI tools contributed to findings

- Explain validation procedures applied

- Maintain professional tone emphasizing surveyor expertise

- Avoid unnecessary AI jargon that might confuse clients

Sample Report Language:

"This survey incorporated AI-enhanced thermal imaging analysis to assist in identifying potential moisture issues. All AI-flagged areas were physically inspected and verified by the surveyor. The professional opinions and recommendations in this report reflect the surveyor's expert judgment based on comprehensive inspection and analysis."

This approach satisfies transparency requirements while maintaining client confidence in professional expertise. For commercial building surveys where clients may be sophisticated property investors, more detailed AI disclosure may be appropriate.

Handling AI-Human Disagreements

A critical operational challenge arises when AI outputs conflict with surveyor observations or professional judgment. The RICS standard's accountability framework provides clear resolution: the named surveyor's professional judgment prevails, but the disagreement must be documented.[3]

Protocol for AI-Human Conflicts:

- Document the Disagreement: Record what AI suggested versus surveyor's assessment

- Analyze the Discrepancy: Investigate why AI and human reached different conclusions

- Apply Professional Judgment: Surveyor makes final determination based on expertise, context, and risk assessment

- Record Reasoning: Document why surveyor's judgment differed from AI output

- Consider System Improvement: Report systematic AI errors to vendor or internal development team

- Update Risk Register: If pattern emerges, update risk register and mitigation strategies

This protocol prevents automation bias while capturing valuable information about AI system limitations that can inform future improvements.
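The last two steps of the protocol, reporting systematic errors and updating the risk register when a pattern emerges, depend on noticing repeats across many surveys. The Python sketch below logs each override and flags any system and defect type overridden at least three times; the threshold, the `Disagreement` fields, and the system names `ThermalAI` and `CrackAI` are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Disagreement:
    """Hypothetical log entry: the AI suggestion versus the surveyor's finding."""

    system: str
    defect_type: str
    ai_suggested: str
    surveyor_found: str
    reasoning: str  # why the surveyor's judgment differed from the AI output


def emerging_patterns(log, threshold=3):
    """Return (system, defect_type) pairs overridden at least `threshold` times.

    These are candidates for a vendor report and a risk register update.
    """
    counts = Counter((d.system, d.defect_type) for d in log)
    return sorted(pair for pair, n in counts.items() if n >= threshold)


log = [
    Disagreement("ThermalAI", "damp", "structural damp", "condensation",
                 "Surface readings dry after ventilation; pattern matches cold bridging"),
    Disagreement("ThermalAI", "damp", "structural damp", "condensation",
                 "Mould at ceiling-wall junction; wall base dry"),
    Disagreement("ThermalAI", "damp", "rising damp", "condensation",
                 "Dampness confined to cold surfaces; no moisture at wall base"),
    Disagreement("CrackAI", "crack", "structural crack", "plaster shrinkage",
                 "Hairline crack confined to plaster finish"),
]
print(emerging_patterns(log))  # [('ThermalAI', 'damp')]
```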

Implementing the Standard: Ongoing Monitoring and Quality Assurance

Compliance with the RICS AI standard is not a one-time achievement but an ongoing operational commitment. Firms must establish continuous monitoring and quality assurance processes.

Ongoing Compliance Activities:

🔄 Quarterly AI System Reviews: Assess accuracy rates, error patterns, and user feedback

🔄 Annual Policy Updates: Review and revise responsible AI use policies based on experience and emerging risks

🔄 Regular Training: Ensure all staff using AI systems maintain competency and understand limitations

🔄 Risk Register Updates: Add newly identified risks and evaluate mitigation effectiveness

🔄 Client Feedback Analysis: Monitor client concerns or questions about AI use

🔄 Incident Investigation: Thoroughly investigate any AI-related errors or near-misses

🔄 Compliance Audits: Conduct internal audits verifying documentation requirements are met

For firms offering RICS valuations across multiple property types, these ongoing activities ensure consistent compliance across all service lines.
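The review cadence above can be tracked as a simple compliance calendar. This Python sketch takes the quarterly and annual intervals from the list; the 12-month training interval is an assumption, since the list says only "regular", and the activity names and dates are illustrative.

```python
import calendar
from datetime import date

# Intervals in months. The quarterly and annual cadences come from the list above;
# the 12-month training refresh is an assumption, since "regular" is not defined.
INTERVALS_MONTHS = {
    "AI system review": 3,
    "Policy update": 12,
    "Staff training refresh": 12,
}


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping to the last day of shorter months."""
    month_index = d.month - 1 + months
    year, month = d.year + month_index // 12, month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def due_dates(last_done: dict[str, date], today: date) -> dict[str, tuple[date, bool]]:
    """Map each activity to (next due date, whether it is already overdue)."""
    result = {}
    for activity, months in INTERVALS_MONTHS.items():
        due = add_months(last_done[activity], months)
        result[activity] = (due, due < today)
    return result


last_done = {
    "AI system review": date(2025, 11, 30),
    "Policy update": date(2025, 3, 9),
    "Staff training refresh": date(2025, 6, 1),
}
for activity, (due, overdue) in due_dates(last_done, today=date(2026, 3, 9)).items():
    print(activity, due.isoformat(), "OVERDUE" if overdue else "scheduled")
```

With these dates the quarterly review falls due on 28 February 2026 (30 November plus three months, clamped to the shorter month) and is flagged as overdue, while the annual policy update falls due on the day of the check.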

Special Considerations for Proprietary AI Development

Firms developing their own AI systems face additional obligations under the RICS standard, reflecting the heightened responsibility that comes with creating rather than merely using AI technology.[2]

Sustainability Impact Assessments

A distinctive requirement for proprietary AI development is a written sustainability impact assessment of each proposed system.[3] This obligation extends environmental responsibility into the digital realm, recognizing that AI systems can consume significant computational resources and energy.

Sustainability Assessment Components:

- Energy Consumption: Estimated computational power required for training and operation

- Carbon Footprint: Environmental impact of system infrastructure

- Resource Efficiency: Comparison with alternative approaches or existing solutions

- Longevity Planning: Expected system lifespan and disposal/decommissioning considerations

- Benefit-Impact Ratio: Whether efficiency gains justify environmental costs

This requirement aligns with broader RICS commitments to sustainability and encourages firms to consider environmental implications alongside technical capabilities.
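The energy consumption and carbon footprint components are often estimated with a simple product: the power drawn by the computing hardware, multiplied by run time, scaled up by the data centre's power usage effectiveness (PUE), and multiplied by the carbon intensity of the electricity grid. The Python sketch below applies that formula; every input value is an illustrative placeholder rather than a measured or published figure, and a real assessment would substitute the firm's own hardware data and a current grid intensity factor.

```python
def emissions_kg(power_kw: float, hours: float, pue: float, grid_kg_per_kwh: float) -> float:
    """Estimate kg CO2e: IT power x run time x PUE x grid carbon intensity."""
    facility_energy_kwh = power_kw * hours * pue  # PUE scales IT energy up to facility energy
    return facility_energy_kwh * grid_kg_per_kwh


# Illustrative placeholders only: not measurements or published factors.
GRID_KG_PER_KWH = 0.2
PUE = 1.5

# Training: four accelerators at a hypothetical 0.3 kW each, running for 48 hours.
training = emissions_kg(power_kw=4 * 0.3, hours=48, pue=PUE, grid_kg_per_kwh=GRID_KG_PER_KWH)
# Operation: one 0.3 kW accelerator for a hypothetical 2,000 hours a year.
inference_per_year = emissions_kg(power_kw=0.3, hours=2000, pue=PUE, grid_kg_per_kwh=GRID_KG_PER_KWH)

lifespan_years = 3  # hypothetical planned lifespan, feeding the longevity component
lifetime_total = training + inference_per_year * lifespan_years
print(f"Training: {training:.1f} kg CO2e")
print(f"Operation over {lifespan_years} years: {inference_per_year * lifespan_years:.1f} kg CO2e")
print(f"Lifetime estimate: {lifetime_total:.1f} kg CO2e")
```

The lifetime figure can then be weighed against the expected efficiency gains when judging the benefit-impact ratio.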

Enhanced Documentation Requirements

Proprietary AI development demands comprehensive documentation covering:

- Development Methodology: How algorithms were designed and training data selected

- Bias Testing: Systematic evaluation of potential biases and mitigation strategies

- Validation Studies: Testing protocols and accuracy assessments before deployment

- Version Control: Documentation of system updates and performance changes

- Maintenance Plans: Ongoing monitoring and improvement commitments

Ethical Considerations in Custom AI Design

Firms developing AI for building surveys must embed ethical principles into system design:

Fairness: Ensure AI doesn't systematically disadvantage certain property types, construction eras, or geographic areas

Transparency: Design systems that can explain their reasoning, not just provide outputs

Privacy: Protect sensitive property and client information throughout AI processing

Safety: Prioritize conservative outputs where uncertainty exists, particularly for structural safety issues

Risk Mitigation Strategies for AI-Assisted Building Surveys

Beyond mandatory compliance requirements, prudent surveyors should implement additional risk mitigation strategies protecting both clients and professional reputation.

Professional Indemnity Insurance Considerations

The introduction of AI into surveying practice has significant insurance implications. Surveyors should:

- Notify Insurers: Inform professional indemnity insurers about AI use before deployment

- Verify Coverage: Confirm that AI-assisted work remains covered under existing policies

- Document Compliance: Maintain evidence of RICS standard compliance so it is available if a claim is made

- Consider Additional Coverage: Evaluate whether cyber liability or technology errors and omissions coverage is appropriate

Building AI Competency Within Survey Teams

Successful AI implementation requires staff competency extending beyond traditional surveying skills:

Essential AI Competencies:

- Understanding AI capabilities and limitations

- Recognizing potential biases and errors

- Validating AI outputs effectively

- Documenting AI-assisted decisions appropriately

- Communicating AI use to clients clearly

Firms should invest in formal training programs ensuring all staff using AI systems achieve these competencies before deployment.

Establishing AI Ethics Committees

Larger surveying firms may benefit from establishing AI ethics committees providing governance oversight for AI implementation decisions. These committees can:

- Review material impact determinations for new AI systems

- Assess compliance with RICS standard requirements

- Evaluate ethical implications of AI applications

- Investigate AI-related incidents or concerns

- Recommend policy updates based on emerging issues

Maintaining Traditional Skills Alongside AI Capabilities

A critical risk mitigation strategy involves ensuring surveyors maintain traditional inspection and analysis skills even as AI tools become prevalent. Over-reliance on AI could lead to skill atrophy, creating vulnerability when AI systems fail or produce unreliable outputs.

Skill Maintenance Strategies:

- Regular manual inspection exercises without AI assistance

- Continuing professional development in traditional techniques

- Mentoring programs pairing experienced surveyors with junior staff

- Periodic "AI-free" surveys to maintain competency

This approach ensures surveyors can confidently exercise professional judgment independently of technological assistance, fulfilling the RICS standard's emphasis on professional accountability.[4]

Future-Proofing AI Compliance in Building Survey Practice

As AI technology evolves rapidly, surveyors must adopt forward-looking approaches ensuring continued compliance and professional excellence.

Monitoring Regulatory Developments

The RICS AI standard represents the profession's current framework, but regulatory evolution is inevitable. Surveyors should:

- Monitor RICS guidance updates and clarifications

- Track broader AI regulation developments (UK AI regulation, EU AI Act implications)

- Participate in professional forums discussing AI implementation experiences

- Engage with RICS consultations on AI-related policy development

Embracing Continuous Improvement Culture

Rather than viewing RICS compliance as a checkbox exercise, leading firms should embrace continuous improvement in AI implementation:

- Learn from Experience: Systematically capture lessons from AI successes and failures

- Share Knowledge: Contribute to professional community understanding of effective AI practices

- Innovate Responsibly: Explore new AI applications while maintaining rigorous governance

- Challenge Assumptions: Regularly question whether AI systems remain fit for purpose

Balancing Innovation and Professional Standards

The tension between technological innovation and professional standards will persist. Successful surveyors will navigate this balance by:

✅ Embracing AI's Potential: Recognizing legitimate efficiency and accuracy improvements AI can deliver

✅ Maintaining Professional Skepticism: Questioning AI outputs and avoiding automation bias

✅ Prioritizing Client Service: Ensuring AI enhances rather than compromises service quality

✅ Upholding Professional Values: Remembering that technology serves professional practice, not vice versa

For surveyors conducting dilapidation surveys or other specialized work, this balance ensures AI adoption enhances rather than undermines professional expertise.

Conclusion

Implementing the RICS professional standard on responsible AI use in building surveys is both a compliance obligation and a professional opportunity. The mandatory standard, effective March 9, 2026, sets clear expectations for how surveyors integrate AI tools into their practice while maintaining professional accountability and service quality.

The core principles are straightforward: determine material impact, conduct governance assessments, maintain risk registers, establish named surveyor accountability, validate outputs rigorously, communicate transparently with clients, and recognize that professional judgment remains paramount regardless of technological assistance. These requirements protect clients, maintain professional standards, and ensure AI enhances rather than compromises surveying practice.

Actionable Next Steps for Surveyors

Immediate Actions (Before March 9, 2026):

- Audit Current AI Use: Identify all AI systems currently used in your practice

- Assess Material Impact: Document whether each system has material impact on service delivery

- Conduct Governance Assessments: Complete written assessments for material impact systems

- Create Risk Registers: Document AI-related risks and mitigation strategies

- Develop Responsible AI Policy: Draft and implement firm-wide AI use policy

- Establish Accountability Protocols: Assign named surveyors for AI output decisions

- Review Insurance Coverage: Confirm professional indemnity insurance covers AI-assisted work

Ongoing Compliance Activities:

- Implement output validation protocols for all AI-assisted surveys

- Train staff on AI competencies and RICS requirements

- Establish client communication standards for AI transparency

- Monitor AI system performance and update risk registers

- Review and update policies annually

- Maintain documentation demonstrating compliance

Strategic Considerations:

- Evaluate whether AI investment aligns with firm strategy and client needs

- Consider competitive advantages from responsible AI implementation

- Assess opportunities for proprietary AI development with appropriate governance

- Engage with professional community to share experiences and best practices

The RICS AI standard positions the surveying profession to harness artificial intelligence's benefits while maintaining the professional judgment, accountability, and ethical standards that define chartered surveying. Surveyors who embrace these protocols will deliver enhanced services to clients while protecting professional reputation and meeting regulatory obligations in 2026 and beyond.

For surveyors seeking guidance on implementing these protocols or requiring professional surveying services that incorporate responsible AI use, expert chartered surveyors can provide comprehensive support ensuring compliance and service excellence.

References

[1] AI Responsible Use Standard (RICS Construction Journal) – https://ww3.rics.org/uk/en/journals/construction-journal/ai-responsible-use-standard.html

[2] Responsible Use of AI (RICS) – https://www.rics.org/profession-standards/rics-standards-and-guidance/conduct-competence/responsible-use-of-ai

[3] Responsible Use of Artificial Intelligence in Surveying Practice, September 2025 (RICS) – https://www.rics.org/content/dam/ricsglobal/documents/standards/Responsible-use-of-artificial-intelligence-in-surveying-practice_September-2025.pdf

[4] RICS First-Ever Standard on Responsible AI Use Now in Effect (RICS) – https://www.rics.org/news-insights/rics-first-ever-standard-on-responsible-ai-use-now-in-effect

[5] RICS Introduces Mandatory AI Standard for Surveyors: What Insurers and Their Clients Need to Know (CMS) – https://cms.law/en/gbr/legal-updates/rics-introduces-mandatory-ai-standard-for-surveyors-what-insurers-and-their-clients-need-to-know

[6] RICS Global Standard: Responsible Use of AI in Surveying Practice (RLB) – https://www.rlb.com/europe/insight/rics-global-standard-responsible-use-of-ai-in-surveying-practice/